MORPH: Design Co-optimization with Reinforcement Learning via a Differentiable Hardware Model Proxy

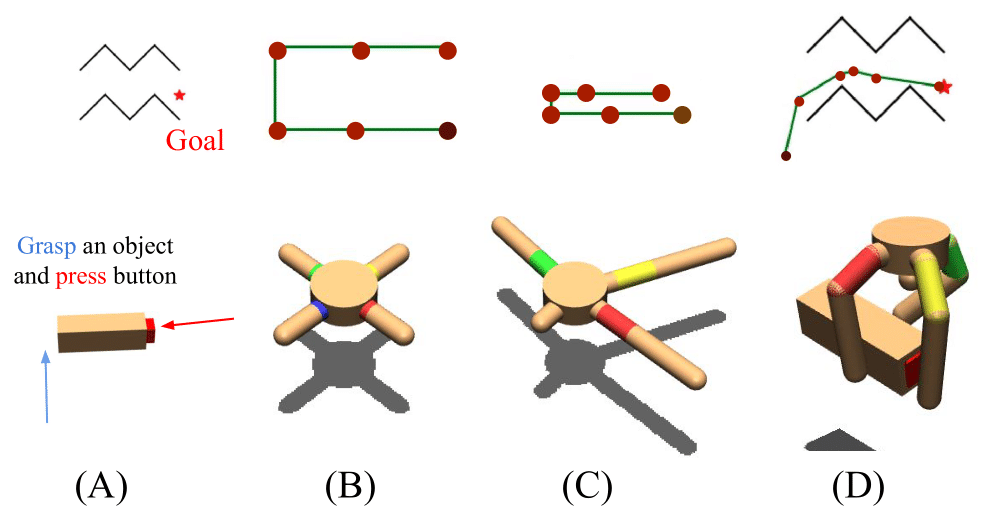

We introduce MORPH, a method for co-optimization of hardware design parameters and control policies in simulation using reinforcement learning. Like most co-optimization methods, MORPH relies on a model of the hardware being optimized, usually simulated based on the laws of physics. However, such a model is often difficult to integrate into an effective optimization routine. To address this, we introduce a proxy hardware model, which is always differentiable and enables efficient co-optimization alongside a long-horizon control policy using RL. MORPH is designed to ensure that the optimized hardware proxy remains as close as possible to its realistic counterpart, while still enabling task completion. We demonstrate our approach on simulated 2D reaching and 3D multi-fingered manipulation tasks.
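The idea in the abstract can be illustrated with a deliberately tiny sketch. It is not the MORPH implementation: plain gradient descent stands in for RL, a single joint angle stands in for the control policy, and every name here (`hardware_reach`, `co_optimize`, `lam`) is hypothetical. A one-parameter differentiable proxy is optimized together with the joint angle to reach a 2D target, while a mismatch penalty keeps the proxy close to a "realistic" one-link arm that is only queried as a black box.

```python
import numpy as np

def hardware_reach(length, theta):
    """Hypothetical black-box hardware model: tip position of a one-link planar arm."""
    return length * np.array([np.cos(theta), np.sin(theta)])

def co_optimize(target, steps=2000, lr=0.05, lam=1.0, eps=1e-4):
    w = 0.5       # proxy parameter; the differentiable proxy predicts tip = w * [cos, sin]
    length = 0.5  # realistic design parameter; queried through hardware_reach only
    theta = 0.1   # stand-in for a policy: one open-loop joint angle
    for _ in range(steps):
        direction = np.array([np.cos(theta), np.sin(theta)])
        proxy_tip = w * direction
        hw_tip = hardware_reach(length, theta)
        task_err = proxy_tip - target
        # Objective: ||proxy_tip - target||^2 + lam * ||proxy_tip - hw_tip||^2
        grad_w = 2.0 * task_err @ direction + 2.0 * lam * (proxy_tip - hw_tip) @ direction
        tangent = w * np.array([-np.sin(theta), np.cos(theta)])
        grad_theta = 2.0 * task_err @ tangent

        # The hardware model is treated as non-differentiable, so the design
        # parameter follows a central finite difference of the mismatch term.
        def mismatch(candidate):
            return np.sum((hardware_reach(candidate, theta) - proxy_tip) ** 2)

        grad_length = lam * (mismatch(length + eps) - mismatch(length - eps)) / (2.0 * eps)

        w -= lr * grad_w
        theta -= lr * grad_theta
        length -= lr * grad_length
    return w, length, theta

if __name__ == "__main__":
    goal = np.array([0.6, 0.8])
    w, length, theta = co_optimize(goal)
    print("proxy:", w, "design:", length, "tip:", hardware_reach(length, theta))
```

In this toy setting the proxy and the realistic design converge to the same link length, so a solution found through the proxy remains achievable by the hardware model.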

Paper

Latest version: arXiv

Supplementary Video

Team

Related works (MORPH on real robots)

TentaMorph: Task-based Design and Policy Co-Optimization for Tendon-driven Underactuated Kinematic Chains

BibTeX

@article{he202morph,
  title={MORPH: Design Co-optimization with Reinforcement Learning via a Differentiable Hardware Model Proxy},
  author={He, Zhanpeng and Ciocarlie, Matei},
  journal={IEEE Robotics and Automation},
  year={2024},
  publisher={IEEE}
}

Contact

If you have any questions, please feel free to contact Zhanpeng He.